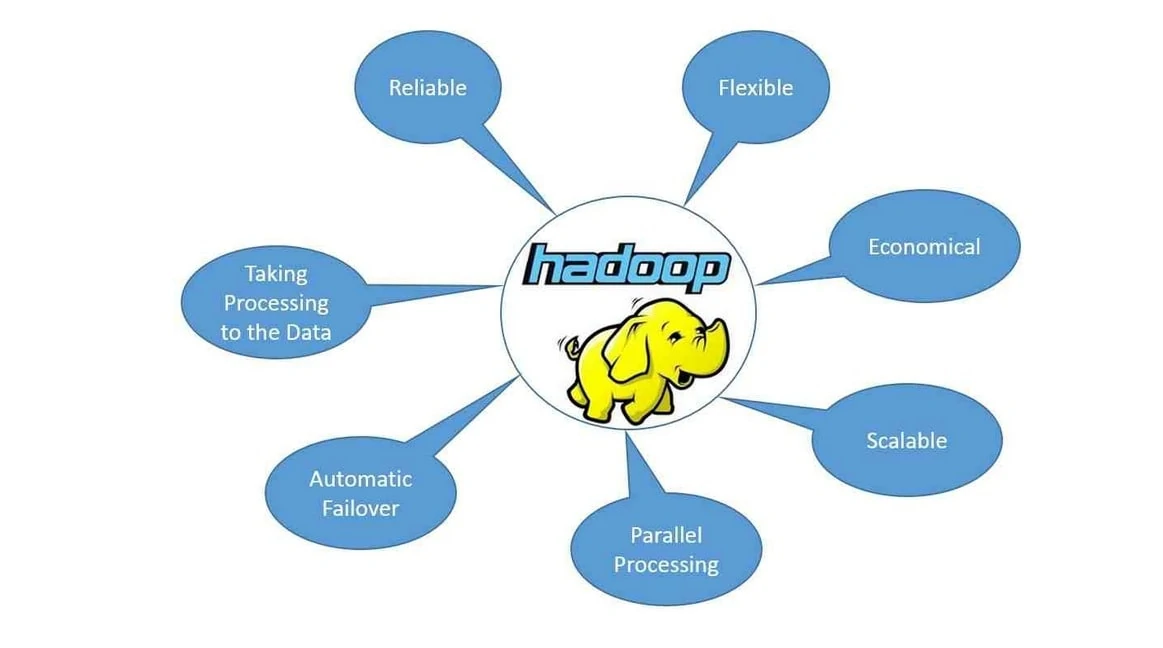

The rapidly growing popularity of Big Data analytics calls for cost-effective and fast processing solutions. In this scenario, Hadoop has become a leading choice globally, thanks to its ability to process very large volumes of unstructured data. Hadoop also addresses the scale and data-variety limitations of conventional RDBMS, and it helps bridge the gap between data management and the people who make decisions from that data.

We have compiled a set of the most frequently asked Hadoop interview questions that will benefit you once you complete the Hadoop online training.

Top 12 Hadoop Interview Questions and Answers:

A Hadoop online training certification course is the first step towards a bright career as a Big Data or Hadoop expert. However, the interviews can be a tough nut to crack if you’re not acquainted with the type of questions asked. So, here are the top 12 Hadoop interview questions:

1. What are the core components of the Hadoop framework?

- The Hadoop framework has the following three core components:

- HDFS: It is a Java-based distributed file system capable of storing all kinds of data without any prior organization.

- MapReduce: It is a programming model for processing massive datasets in parallel.

- YARN: It is a framework for resource management, used to schedule and handle distributed applications’ resource requests.

2. What is HDFS and what are its different components?

- HDFS stands for Hadoop Distributed File System, and it is Hadoop’s main data storage unit. Following the master and slave topology, HDFS stores different types of data in the form of blocks in a distributed environment.
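To make the idea of block storage concrete, here is a minimal sketch in plain Python (it does not use any Hadoop API). The 128 MB block size, the replication factor of 3, the round-robin placement, and the node names `dn1` to `dn4` are all assumptions chosen for illustration; real HDFS placement is rack-aware and more involved.

```python
# Toy model of how a file is cut into blocks and how a master node
# could record which worker nodes hold each block. Illustrative only.
BLOCK_SIZE = 128 * 1024 * 1024  # assumed block size: 128 MB
REPLICATION = 3                 # assumed replication factor


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the sizes (in bytes) of the blocks a file would occupy."""
    full, rest = divmod(file_size, block_size)
    return [block_size] * full + ([rest] if rest else [])


def place_blocks(num_blocks, datanodes, replication=REPLICATION):
    """Map each block index to the nodes holding a replica (simple round-robin)."""
    return {
        b: [datanodes[(b + r) % len(datanodes)] for r in range(replication)]
        for b in range(num_blocks)
    }


blocks = split_into_blocks(300 * 1024 * 1024)  # a hypothetical 300 MB file
metadata = place_blocks(len(blocks), ["dn1", "dn2", "dn3", "dn4"])
print(len(blocks), metadata)
```

The last block is smaller than the others because a file rarely fills a whole number of blocks; the `metadata` dictionary stands in for the block-to-node mapping that the master keeps.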

- HDFS has the following two components:

- NameNode: It is the master node responsible for the maintenance of the metadata information of the data blocks stored in HDFS. It controls all the DataNodes.

- DataNode: It is the slave node that stores data in the HDFS.

3. What are the different components of YARN?

- YARN has the following components:

- Node Manager: It is a daemon that runs on every slave (worker) node, where it launches and monitors the containers that execute tasks on that node.

- Resource Manager: It runs as a daemon on the master node and controls the allocation of resources in the cluster.

- Container: It is a bundle of resources such as CPU, RAM, disk and network on a single node.

- Application Master: There is one per application. It negotiates resources with the Resource Manager and works with the Node Managers to run and monitor the application's tasks throughout the job lifecycle.

4. What are some fundamental differences between Hadoop and RDBMS?

- The major differences between Hadoop and RDBMS include:

- While Hadoop can process structured, unstructured and semi-structured data, RDBMS can only process structured data.

- Hadoop supports Online Analytical Processing (OLAP) and RDBMS supports Online Transactional Processing (OLTP).

- Hadoop follows the policy of schema on read and RDBMS uses schema on write.

- In terms of speed, writes are fast in Hadoop while reads are fast in RDBMS.

- While Hadoop is a free and open-source framework, many RDBMS products are commercially licensed.
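The "schema on read" point can be illustrated with a small sketch in plain Python. The raw records, the field names, and the `read_with_schema` helper are hypothetical; the idea is only that data is stored as-is and a schema is applied when it is read, so the same raw data can be read with different schemas.

```python
import json

# Raw data is stored untouched; no structure is enforced at write time.
raw_records = [
    '{"user": "alice", "age": "31", "city": "Pune"}',
    '{"user": "bob", "age": "27"}',
]


def read_with_schema(lines, schema):
    """Apply a schema while reading: select fields, cast them, tolerate gaps."""
    for line in lines:
        record = json.loads(line)
        yield {
            name: cast(record[name]) if name in record else None
            for name, cast in schema.items()
        }


# Two different "views" over the same stored data.
people = list(read_with_schema(raw_records, {"user": str, "age": int}))
cities = list(read_with_schema(raw_records, {"city": str}))
print(people)
print(cities)
```

With schema on write, the second record would typically have to be fixed or rejected before it could be stored; here the missing field simply shows up as `None` at read time.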

5. What happens when a DataNode fails?

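- In case of a DataNode failure, the JobTracker and the NameNode first detect the failure. All tasks that were running on the failed node get re-scheduled on other, healthy nodes. Then, the NameNode re-replicates the blocks that were stored on the failed node to different nodes, so that the replication factor is restored.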
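The replication step can be sketched as a toy simulation in plain Python. The block IDs, node names, and the `handle_datanode_failure` helper are hypothetical, and the "pick the first live node that lacks a replica" policy is an assumption made for brevity, not how HDFS actually chooses targets.

```python
def handle_datanode_failure(block_map, failed, live_nodes, replication=3):
    """Remove the failed node from each block's replica list, then top the
    list back up to the desired replication factor using live nodes."""
    for holders in block_map.values():
        if failed in holders:
            holders.remove(failed)
        for node in live_nodes:
            if len(holders) >= replication:
                break
            if node not in holders:
                holders.append(node)
    return block_map


block_map = {
    "blk_1": ["dn1", "dn2", "dn3"],
    "blk_2": ["dn2", "dn3", "dn4"],
}
result = handle_datanode_failure(block_map, "dn2", ["dn1", "dn3", "dn4"])
print(result)
```

After the call, every block again has three replicas and none of them lives on the failed node.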

6. Explain the role of the different Hadoop daemons.

- In Hadoop, a daemon refers to a process that runs in the background. A classic (Hadoop 1.x) deployment has five of them:

- DataNode: It is the slave node that stores the actual data.

- NameNode: It is the master node responsible for storing the metadata for all the files and directories.

- Secondary NameNode: Despite its name, it is not a hot standby for the NameNode. It periodically merges the NameNode's edit log into the file system image (a checkpoint) and keeps a copy of the merged metadata, which can help with recovery.

- TaskTracker: Operating on the DataNode, it runs the tasks and reports their progress to the JobTracker.

- JobTracker: It is used for the creation and running of jobs. Running on the master node, it assigns the tasks of each job to the TaskTrackers.

7. How does Hadoop MapReduce work?

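- MapReduce processes data in two phases. In the map phase, the input data is divided into splits, and map tasks running in parallel across the cluster turn each split into intermediate key-value pairs. In the reduce phase, the values that belong to the same key are aggregated into the final result. In the classic word-count example, the map phase emits a count for every word in each document, and the reduce phase sums those counts per word across the entire collection.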
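The word-count flow can be sketched in a few lines of plain Python. This is a single-process imitation of the map, shuffle, and reduce steps, not Hadoop code; the function names and the two sample splits are made up for illustration.

```python
from collections import defaultdict


def map_phase(split):
    """Map: emit a (word, 1) pair for every word in one input split."""
    for line in split:
        for word in line.lower().split():
            yield word, 1


def shuffle(pairs):
    """Shuffle: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: sum the values collected for each key."""
    return {key: sum(values) for key, values in groups.items()}


# Each inner list stands in for one input split handled by one map task.
splits = [["big data is big"], ["data is everywhere"]]
pairs = [pair for split in splits for pair in map_phase(split)]
counts = reduce_phase(shuffle(pairs))
print(counts)
```

In a real cluster the map calls would run in parallel on different nodes and the shuffle would move data over the network, but the data flow is the same.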

8. What is distributed cache in the MapReduce framework?

- Distributed cache is a significant feature of the MapReduce framework used for sharing read-only files that a job needs across all the nodes in the Hadoop cluster. The files could be executable JAR files, archives or properties files.

9. Explain Avro Serialization in Hadoop.

- In Hadoop, Avro is a schema-based and language-independent data serialization library. Avro serialization refers to the method of translating the state of data structures or objects into a binary or textual form. Its schemas are defined in JSON, and it allows running of MapReduce programs by providing AvroMapper and AvroReducer.
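To show what schema-based binary serialization means, here is a hand-rolled sketch in plain Python that follows Avro's binary encoding rules for two types only: `long` (zigzag variable-length integers) and `string` (a length followed by UTF-8 bytes). It deliberately does not use the real Avro library, and the `User` schema and helper names are hypothetical.

```python
import json

# The schema is JSON; the encoded data carries no field names of its own.
SCHEMA = json.loads("""
{"type": "record", "name": "User",
 "fields": [{"name": "name", "type": "string"},
            {"name": "age", "type": "long"}]}
""")


def encode_long(n):
    """Zigzag-encode a 64-bit integer, then write it as a base-128 varint."""
    z = (n << 1) ^ (n >> 63)
    out = bytearray()
    while z & ~0x7F:
        out.append((z & 0x7F) | 0x80)
        z >>= 7
    out.append(z)
    return bytes(out)


def encode_string(s):
    """A string is its byte length (as a long) followed by UTF-8 bytes."""
    data = s.encode("utf-8")
    return encode_long(len(data)) + data


ENCODERS = {"string": encode_string, "long": encode_long}


def encode_record(record, schema):
    """Encode fields in schema order, with no per-field markers."""
    return b"".join(
        ENCODERS[field["type"]](record[field["name"]])
        for field in schema["fields"]
    )


encoded = encode_record({"name": "foo", "age": 64}, SCHEMA)
print(encoded.hex())
```

Because the bytes contain only values, a reader needs the same schema to make sense of them, which is why the schema travels with the data in Avro files.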

10. What is the heartbeat in the Hadoop Distributed File System?

- Heartbeat refers to a signal that is sent periodically from a DataNode to the NameNode and from a TaskTracker to the JobTracker. If the NameNode or the JobTracker stops receiving the heartbeat, it is considered that there is some issue with the DataNode or the TaskTracker.
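Heartbeat-based failure detection can be sketched as follows in plain Python. The master side keeps the time of the last heartbeat it received from each worker and flags any worker that has been silent for longer than a timeout. The node names, timestamps, and the 30-second timeout are illustrative assumptions, not Hadoop defaults.

```python
def find_dead_nodes(last_heartbeat, now, timeout):
    """Return the nodes whose last heartbeat is older than `timeout` seconds."""
    return sorted(
        node for node, seen in last_heartbeat.items() if now - seen > timeout
    )


# Hypothetical bookkeeping on the master: node -> time of last heartbeat (s).
last_heartbeat = {"dn1": 100.0, "dn2": 95.0, "dn3": 40.0}
dead = find_dead_nodes(last_heartbeat, now=101.0, timeout=30.0)
print(dead)
```

Note that the check runs on the receiving side: it is the absence of incoming heartbeats that marks a worker as failed.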

11. What are the most frequently defined input formats of Hadoop?

- In Hadoop, the following are the most frequent input formats:

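- Key-value input format: It is used in the case of plain text files whereby the files are divided into lines, and each line is split into a key and a value by a separator (a tab by default).

- Sequence file input format: It is used to read sequence files.

- Text input format: It is the default input format in Hadoop. Each line of a plain text file becomes one record, with the byte offset of the line as the key and the line content as the value.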
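The difference between the text and key-value input formats is easiest to see on the same input. The sketch below imitates both in plain Python; the function names and the sample data are hypothetical, and no Hadoop classes are involved.

```python
def text_input_format(data):
    """Yield (byte offset, line) records, as the default text format does."""
    offset = 0
    for line in data.splitlines(keepends=True):
        yield offset, line.rstrip("\n")
        offset += len(line.encode("utf-8"))


def key_value_input_format(data, separator="\t"):
    """Yield (key, value) records by splitting each line at the first separator."""
    for line in data.splitlines():
        key, _, value = line.partition(separator)
        yield key, value


data = "id1\talice\nid2\tbob\n"
text_records = list(text_input_format(data))
kv_records = list(key_value_input_format(data))
print(text_records)
print(kv_records)
```

The same two lines produce offset-keyed records in one case and separator-derived keys in the other, which is why the choice of input format determines what the mapper receives.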

12. What are the various HDFS commands?

- The various HDFS commands include:

- mkdir

- version

- put

- ls

- get

- copyFromLocal

- cat

- copyToLocal

- cp

- mv

If you’re still wondering whether you should sign up for Hadoop online training, then think about big companies like Yahoo, Facebook and the like, which generate terabytes and petabytes of data every day and have relied on Hadoop for their data analytics. Looking at the explosive growth and popularity of Big Data, it is reasonable to expect that Big Data is here for the long term. Learning is the key, and in this light, a Hadoop online training certification can help you land good job opportunities with a handsome salary.